
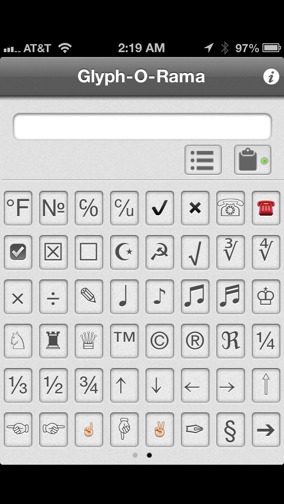

Go to the Home tab and, in the Font group, change the font to Wingdings (or another symbol font). If the code for the character you want is shorter than four digits, add zeros to the beginning to bring it up to four digits, as character codes entered this way are always four digits long.

This series of articles explores how your Mac handles text, how you can get the best out of that, and how you can fix any problems that arise with text. This first article tackles the format that all text in OS X uses: Unicode.

Text and numbers were the first types of data to be widely used by computers, and no matter whether your Mac is your graphic design tool, your image or movie editor, or your main musical instrument, you cannot help but work with text.

In an app on your Mac, choose Edit > Emoji & Symbols, or open the Character Viewer from the Input menu (if you set the option in Keyboard preferences). Depending on your Mac model, you can also set an option in the Keyboard pane of Keyboard System Preferences to access the Character Viewer by pressing the Fn key or (if available on the keyboard).

To open a Word-JP document you really had to use the Japanese version of Word, as another word processor might be unable to cope with the encoding, and all you would then see was gibberish.

You may still come across such old text, whose encoding is based on a ‘codepage’ which defined the binary codes used for each of the characters available. For example, Cyrillic (including Russian) text might be encoded using the KOI8 codepage, just one of several widely used. However, if you try opening it using a codepage known as MIK, the content will be rendered as unintelligible garbage. Because this was a common problem in Japanese, it has become known by its Japanese term, ‘mojibake’.

Problem: You open an old document only to discover that it has ‘mojibake’. How can you open it properly?

Solution: Establish its likely origin, and if possible the codepage that it uses. Old binary formats may require you to go back to the original app which created them (virtualisation can be helpful here!). Even current HTML and PDF documents may still not use Unicode; in those cases you may need to open the document and export as text, then try converting the codepage.

Tools: Text Encoding Converter, Encoding Master or Peep to convert between codepages. Text Encoding Converter allows you to preview text using different codepages, to work out which to use to convert the file to Unicode. TextSoap to clean up ligatures and any remaining mess in text files, with its built-in and custom scripts.

A cross-platform standard launched in 1991 and currently in version 7.0.0 (revised 16 June 2014), Unicode offers a lot of flexibility in the encoding of text, with a choice of encoding schemes built on 1, 2, or 4 byte (8, 16, or 32 bit) units. These are known as UTF-8, UTF-16, and UTF-32 respectively.

UTF-8 is potentially the most compact and efficient, as it maps the old ASCII Roman character set into single byte codes. It is also in many cases slightly slower to handle than the other formats, because of the more elaborate software algorithms required to encode and decode.

UTF-16 is something of a compromise format between the 8-bit and 32-bit schemes.

UTF-32 is the least efficient in terms of required storage, as every character requires the full 4 bytes, but is normally the quickest to process. In fact Unicode currently does not require all 32 bits of the full encoding, and only uses 21 bits, up to its highest code of 10FFFF in hexadecimal. This is useful, as you should see every fourth byte in UTF-32 set to 00, making it easy to recognise and correct for errors.

There is one final twist with Unicode: whether it is ‘little-ended’ or ‘big-ended’. Different processor architectures order bytes in one of two ways, little- or big-ended, depending on whether the most significant (largest) byte comes first.
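If you want to see these differences for yourself, the short Python sketch below encodes the same four characters in each scheme and byte order. It is only an illustration: the sample string and the helper names `encoded_sizes` and `high_bytes_are_zero` are made up for this example, and the codec names are those of Python's standard library.

```python
# Sample text (an arbitrary choice for this sketch):
# "A" (U+0041), "é" (U+00E9), "€" (U+20AC) and an emoji (U+1F600).
SAMPLE = "A\u00e9\u20ac\U0001F600"

# Codecs with an explicit byte order, so no byte-order mark is added.
CODECS = ("utf-8", "utf-16-le", "utf-16-be", "utf-32-le", "utf-32-be")


def encoded_sizes(text):
    """Return the number of bytes the text needs in each encoding."""
    return {codec: len(text.encode(codec)) for codec in CODECS}


def high_bytes_are_zero(text):
    """Check that the most significant byte of every UTF-32 unit is 00."""
    data = text.encode("utf-32-be")  # big-ended: most significant byte first
    return all(byte == 0 for byte in data[0::4])


if __name__ == "__main__":
    for codec in CODECS:
        data = SAMPLE.encode(codec)
        print(f"{codec:10} {len(data):2d} bytes  {data.hex(' ')}")
    print("UTF-32 high bytes all zero:", high_bytes_are_zero(SAMPLE))
```

In the hex dumps, the little-ended and big-ended versions contain the same bytes within each code unit, just in reverse order.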

(Note that the characters shown here will only render properly in your browser if you have a suitable font enabled to support them.)

You can explore Unicode encoding and related information using UnicodeChecker, which shows you the whole Unicode character set, if you have the fonts to support it all.

Why are there still problems with text encoding?

Despite Unicode having been around for so long, and being supported by OS X and Windows for many years, not all apps and formats have moved with the times. The two major offenders now are HTML and PDF.

Many older websites still use 8-bit text, and a few still use old codepages to handle non-Roman scripts. Although these are fading away, if you do stumble across one, you can only try the tools listed to make the best sense possible. Switching fonts in your browser could also help render the content properly, but you are largely going to have to work by guesswork. If the site is that important, try downloading it as raw HTML and work on that offline.

When you are writing HTML, directly or using a more friendly editor, you must ensure that the encoding scheme is assigned explicitly, either in the headers or in meta tags. Remember that your readers may be working in a completely different script system and language, so their browser needs to be told properly how to render your words.

PDF can be much more tricky. Most documents still being written to PDF files adhere to older versions of the PDF standard, which may not cross encoding schemes intact. Although you can try fiddling with the codepage and character set, it is usually simpler to export the contents as text and work on those using codepage and text tools. Sometimes it is simpler just to scan in a printed copy of the document and use OCR, or cheaper to get the document retyped straight into Unicode. Archival work should comply with PDF/A-1a or /A-2u at the least, to ensure that every character stored has a Unicode equivalent.

Sometimes the problem lies not so much with the text encoding as with the lack of suitable fonts to support that text. If you are struggling to find the right font, try DejaVu, which has almost universal cover of Unicode characters.

Some of the worst problems remain with documents created in Eastern Bloc countries, which often used unique codepages tied to certain fonts produced by local foundries. To get those to render correctly, you may need to install the original font.

What is the best text editor to use for this?

There are probably more text editors available than any other class of app.
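If you would rather do the codepage guesswork in a script than in one of the apps mentioned, the Python sketch below shows the general idea: decode the same bytes using several candidate codepages, look for the result that reads correctly, then save that as UTF-8. Treat it as a rough illustration under some assumptions. The candidate list, the sample word, and the helper names `decode_with_each` and `convert_to_utf8` are invented for this example. Python's standard codec list does not appear to include MIK, so `cp1251` stands in as the mismatched codepage, and the standard `koi8_r` codec stands in for KOI8.

```python
# Candidate Cyrillic codepages to try (an assumed, far from complete list).
CANDIDATES = ("koi8_r", "cp1251", "cp866", "iso8859_5", "mac_cyrillic")


def decode_with_each(raw, codepages=CANDIDATES):
    """Decode the same bytes with each codepage that accepts them."""
    results = {}
    for name in codepages:
        try:
            results[name] = raw.decode(name)
        except UnicodeDecodeError:
            # These bytes are not valid in this codepage, so rule it out.
            continue
    return results


def convert_to_utf8(raw, codepage):
    """Re-encode bytes from a known codepage as UTF-8."""
    return raw.decode(codepage).encode("utf-8")


if __name__ == "__main__":
    # Simulate an old file: a Russian word stored using KOI8-R.
    raw = "Привет".encode("koi8_r")
    for name, text in decode_with_each(raw).items():
        print(f"{name:13} {text}")
```

Only one of the printed lines should read as a real word; the others are mojibake. A script cannot tell which is which without knowing the language, so the final choice still needs a human eye.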

Author: Len
